When starting a greenfield project, it’s easy to take advantage of the most modern development practices. An engineering team can create a new project in Azure or AWS and, with a few clicks, have it auto-deploying onto the team’s platform of choice in no time.

But what about the rest of us, who are working on codebases that are more than five minutes old? How do you take code that’s four years old and hundreds of thousands of source lines long and turn that into a lean, mean, continuous-deploying machine? How do you build a road map to go from a product that may take weeks or months to deploy out to customers into one that may deploy several times an hour?

And how do you ensure that your product that’s deploying several times an hour is doing so safely and sanely, and isn’t shoveling bad patch after bad patch onto your production system?

Start with an honest assessment

There’s more to the process than simply “go slow and iterate.” It starts with identifying clearly and honestly where your codebase and team are with regard to deployments and knowing where you want to go.

Not every project will be appropriate to continuously deploy to production, and that’s okay! Any step taken down the road to automate the build-and-deploy pipeline will pay dividends in overall developer productivity and code quality.

But what are those dividends? Why does continuous delivery matter? In their book, Accelerate: The Science of Lean Software and DevOps: Building and Scaling High Performing Technology Organizations, Nicole Forsgren, Jez Humble, and Gene Kim elaborate on several studies they have carried out over the last few years.

They tracked performance metrics ranging from company success to employee retention across organizations with varying levels of continuous delivery practices. Among the tangible benefits that were statistically significant, they found specifically that high-performing organizations:

- Had a 50% advantage in market capitalization growth over three years when compared to low-performing organizations

- Spent 50% less time remediating security issues

- Were twice as likely to be recommended as a great place to work by their employees

So what does it mean to be a high-performing organization? And how does a low-performing organization become a high-performing one?

The four pillars of continuous capabilities

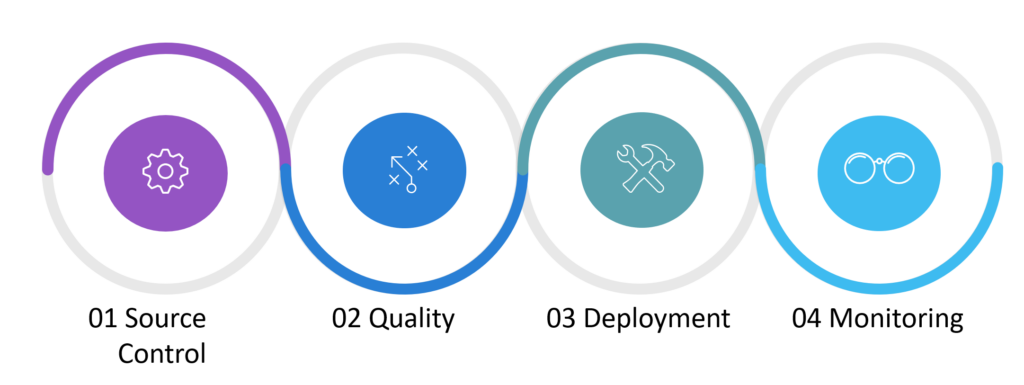

To achieve full continuous delivery, you need capabilities in four main pillars, each with its own distinct stages.

Source control

Does the entire team use source control? Do they have practices that are conducive to continuous delivery, such as GitHub Flow or trunk-based development?

Software quality

Are there automated tests? Do they pass reliably? Do they extend beyond simple unit tests and into the more specialized testing areas? Can you run them at will?

Deployment

Are production builds created and deployed entirely by automation? Is there infrastructure as code to allow predictability and maintainability in the deployment architecture? Can you deploy services individually, or does everything deploy as a monolith?

Monitoring

Do you collect logs? Do you collect metrics? Are they set up to alert for failures? Do you have traceability for failures across the entire stack?

It’s important to know not just what stage your project currently is in, but what it means to be in that stage. At each point, significantly different types of work are needed to advance to the next stage in the process, and each stage is correlated with a reasonable delivery strategy along the spectrum, from fully manual to fully automated.

Similarly, it doesn’t make sense to tackle the work from a far-future stage if you have not yet cleared all the requirements for the next one. For example, there’s not much benefit to tracking your UI code coverage metrics across builds if you’re not even reliably running your unit tests during those builds.

This is very much a new and evolving field of software engineering. Processes that were once feasible only at the very largest and most engineering-rich companies, such as Google and Twitter, are now achievable for those of us in small and medium-sized organizations as well.

How the practices break down

The maturity of each practice breaks down into four levels, where level 1 indicates a relatively immature practice and level 4 indicates an extremely mature one based on currently available technology.

Source control practices

- Level 1: A source control system might exist. Maybe. Probably.

- Level 2: A source control system definitely exists, but the team uses branches inefficiently: either too many, with deeply nested levels, or too few. Merges can be complex and fraught.

- Level 3: Developers generally use a modern source control system and follow a defined practice, such as GitHub Flow. Branches probably live for about a week on average, and merges are medium to large.

- Level 4: All developers practice trunk-based development, with frequent, easily digestible commits to master, perhaps even daily.

Quality practices

- Level 1: There are very few automated tests and definitely no automated test runs. Not all tests pass. The most common refrain is, “It works on my machine!”

- Level 2: Automated unit tests exist, run with every merge to master, and are generally reliable. Integration/service tests may exist but are probably manual or few in number.

- Level 3: Test and code-quality metrics are routinely collected and tracked, and are used as gates for merging commits. Integration/service tests are automated and reliable. Specialty tests exist but are probably run manually.

- Level 4: Specialty tests, such as performance and security tests, run automatically. Every test pass is automated and can be run on demand for any change at any stage of development.
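Using metrics as merge gates (level 3) can be pictured as a small check that a CI job runs after the test suite. This is a minimal sketch, assuming the CI system reports pass/fail counts and a coverage percentage; the `SuiteResult` type, the `gate` function, and the 80% threshold are hypothetical and not taken from any particular tool.

```python
from dataclasses import dataclass


@dataclass
class SuiteResult:
    """Summary of one automated test run, as a CI job might report it (hypothetical)."""
    passed: int
    failed: int
    coverage_percent: float  # line coverage reported by the coverage tool


def gate(run: SuiteResult, min_coverage: float = 80.0) -> list[str]:
    """Return the reasons to block a merge; an empty list means the gate passes."""
    reasons = []
    if run.passed == 0 and run.failed == 0:
        reasons.append("no tests were run")
    if run.failed > 0:
        reasons.append(f"{run.failed} test(s) failed")
    if run.coverage_percent < min_coverage:
        reasons.append(
            f"coverage {run.coverage_percent:.1f}% is below the {min_coverage:.1f}% gate"
        )
    return reasons


if __name__ == "__main__":
    # Example: all tests pass, but coverage is under the hypothetical threshold.
    for reason in gate(SuiteResult(passed=412, failed=0, coverage_percent=76.5)):
        print("merge blocked:", reason)
```

In a pipeline, the step could end with `sys.exit(1 if gate(result) else 0)`, since CI systems generally treat a non-zero exit code as a failed check that blocks the merge.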

Deployment practices

- Level 1: Builds are sometimes created on a developer’s machine and pushed directly into production.

- Level 2: Builds are created by an automated build system and deployed from within it. There may be some manual steps, but they are extremely limited in scope and number.

- Level 3: Components are built automatically and can be deployed independently of the whole. A deployment requires no manual steps beyond pushing a button.

- Level 4: Everything is containerized. Developers can run a release build on their own machines as easily as in production.
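Deploying components independently (level 3) implies that the pipeline can work out which components a change actually touches. The sketch below assumes a monorepo in which each component lives in its own directory; the `COMPONENTS` mapping, the shared prefixes, and `components_to_deploy` are all hypothetical. The list of changed paths could come from something like `git diff --name-only` between the last deployed commit and the new one.

```python
from pathlib import PurePosixPath

# Hypothetical mapping of component name -> source directory in the repository.
COMPONENTS = {
    "web": "services/web",
    "billing": "services/billing",
    "search": "services/search",
}
# Hypothetical shared paths: a change here affects every component.
SHARED_PREFIXES = ("libs/common", "build")


def components_to_deploy(changed_paths: list[str]) -> set[str]:
    """Return the set of components whose code changed in this commit range."""
    affected: set[str] = set()
    for raw in changed_paths:
        path = PurePosixPath(raw)
        if any(path.is_relative_to(prefix) for prefix in SHARED_PREFIXES):
            return set(COMPONENTS)  # shared change: redeploy everything
        for name, directory in COMPONENTS.items():
            if path.is_relative_to(directory):
                affected.add(name)
    return affected


if __name__ == "__main__":
    # Documentation-only changes trigger no deployment at all.
    print(sorted(components_to_deploy(["services/web/app.py", "docs/README.md"])))
```

Comparing paths component by component with `PurePosixPath.is_relative_to` (Python 3.9+) rather than `str.startswith` avoids treating a directory such as `buildtools/` as if it were under `build`.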

Monitoring practices

- Level 1: Logs exist on individual servers but aren’t aggregated in any way, so tracing issues is difficult.

- Level 2: Logs are at least semi-structured and are centrally aggregated for searching across machines and environments.

- Level 3: Metrics and counters exist to answer aggregate questions about system health. They supplement server logs, which provide deep dives into individual issues.

- Level 4: Full observability is achieved. Logs are well structured and appropriately leveled, counters are standardized, and a request can readily be traced across many different parts of the system.
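At the higher monitoring levels, logs become structured records rather than free text, and each record carries an identifier that ties it to a request. Here is a minimal sketch using Python’s standard `logging` module, assuming JSON lines as the output format; the field names, the `request_id` attribute, and the `handle_checkout` handler are illustrative assumptions, not a standard schema.

```python
import json
import logging
import uuid


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON object on one line."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
            # Set per call through `extra`; None if a caller forgot to pass it.
            "request_id": getattr(record, "request_id", None),
        }
        return json.dumps(entry)


def make_logger(name: str, stream=None) -> logging.Logger:
    """Build a logger that writes JSON lines to `stream` (stderr by default)."""
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger = logging.getLogger(name)
    logger.handlers = [handler]  # avoid duplicate handlers if called twice
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def handle_checkout(logger: logging.Logger, request_id: str) -> None:
    """Hypothetical request handler: every line it logs carries the same ID."""
    ctx = {"request_id": request_id}
    logger.info("checkout started", extra=ctx)
    logger.warning("payment provider slow, retrying", extra=ctx)


if __name__ == "__main__":
    handle_checkout(make_logger("checkout"), request_id=str(uuid.uuid4()))
```

In a distributed system, the request ID would typically arrive in an incoming request header and be forwarded on outgoing calls, so that a log aggregator can join lines from several services on that one field.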

Putting it all together

To build a reasonable road map, an organization must first determine at what stage it is currently operating. Do an honest survey of the displayed capabilities for each of the four pillars to determine which level you’re at.

Figure 2: When the four stages of each of the pillars are laid out together, it’s easy to see what delivery stage is appropriate for each aggregate of capabilities.

Ideally, your team’s capabilities will be evenly developed across all four pillars. In practice, however, most organizations will have an uneven spread. Perhaps they are heavily invested in their quality technology (a 3) but have put very little effort into monitoring (a 1).

The team must then decide where it would like to go. It is neither appropriate nor necessary for every software organization to achieve full, continuous, automated deployment, with every change releasing immediately to production. Many organizations will be satisfied with delivery stage 3, where it is straightforward and routine for someone to “push the button” to ship a change or set of changes live.

Once your team knows where it is and where it would like to go, it can determine what it needs to accomplish to arrive at that destination. Consider the team in the previous example. It has determined that push-button deployment (delivery stage 3) is an appropriate goal, given stakeholders’ concerns and the investment they are willing to make. Assume it has self-assessed with the following scores:

With its current scores, the team can appropriately deliver software at stage 2 (early automation). If it is not already delivering that way, it could safely adopt that stage without any further improvement to the pillars. If it wants to move on to push-button deployments, however, the course of action is clear.

The most bang for the buck tends to come from moving a capability from level 1 to level 2. Thus, the team should begin by improving its monitoring practices, its weakest pillar. It would gain relatively little by instead choosing to, say, improve its source control practices from level 3 to level 4.
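The reasoning above can be expressed as a simple prioritization heuristic: repeatedly raise the weakest pillar by one level until every pillar reaches a chosen target. This is a sketch under stated assumptions. The scores are hypothetical (the deployment score in particular is invented for illustration), the function names are made up, and treating “every pillar at the target level” as the goal is a simplification of the fuller mapping from pillar scores to delivery stages in Figure 2.

```python
from typing import Optional

# The four pillars, in a fixed order used to break ties.
PILLARS = ("source control", "quality", "deployment", "monitoring")


def next_pillar(scores: dict[str, int], target: int = 3) -> Optional[str]:
    """Return the weakest pillar still below `target`, or None if all have reached it."""
    if not 1 <= target <= 4:
        raise ValueError(f"target must be between 1 and 4, got {target!r}")
    missing = set(PILLARS) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for pillar, level in scores.items():
        if pillar not in PILLARS or not 1 <= level <= 4:
            raise ValueError(f"invalid score {level!r} for {pillar!r}")
    behind = [p for p in PILLARS if scores[p] < target]
    if not behind:
        return None
    # min() returns the first minimal item, so ties follow PILLARS order.
    return min(behind, key=lambda p: scores[p])


def road_map(scores: dict[str, int], target: int = 3) -> list[tuple[str, int, int]]:
    """List one-level improvements, weakest pillar first, until all reach `target`."""
    current = dict(scores)
    steps = []
    while (pillar := next_pillar(current, target)) is not None:
        steps.append((pillar, current[pillar], current[pillar] + 1))
        current[pillar] += 1
    return steps


# Hypothetical self-assessment; the deployment score is invented for illustration.
example = {"source control": 3, "quality": 3, "deployment": 2, "monitoring": 1}

if __name__ == "__main__":
    for pillar, start, end in road_map(example):
        print(f"raise {pillar} from level {start} to level {end}")
```

With these scores, the plan starts with monitoring (1 to 2), then deployment (2 to 3), then monitoring again (2 to 3), while source control stays at level 3 because the target does not call for level 4.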

Step back to move forward

If your organization is still early in its continuous delivery journey, deciding where to begin can seem like a daunting task. But if you take a step back and evaluate both your current capabilities and how much investment you’re willing to make, you can build a road map that will get you, step by step, to whatever destination you desire.

Primary source: techbeacon.com